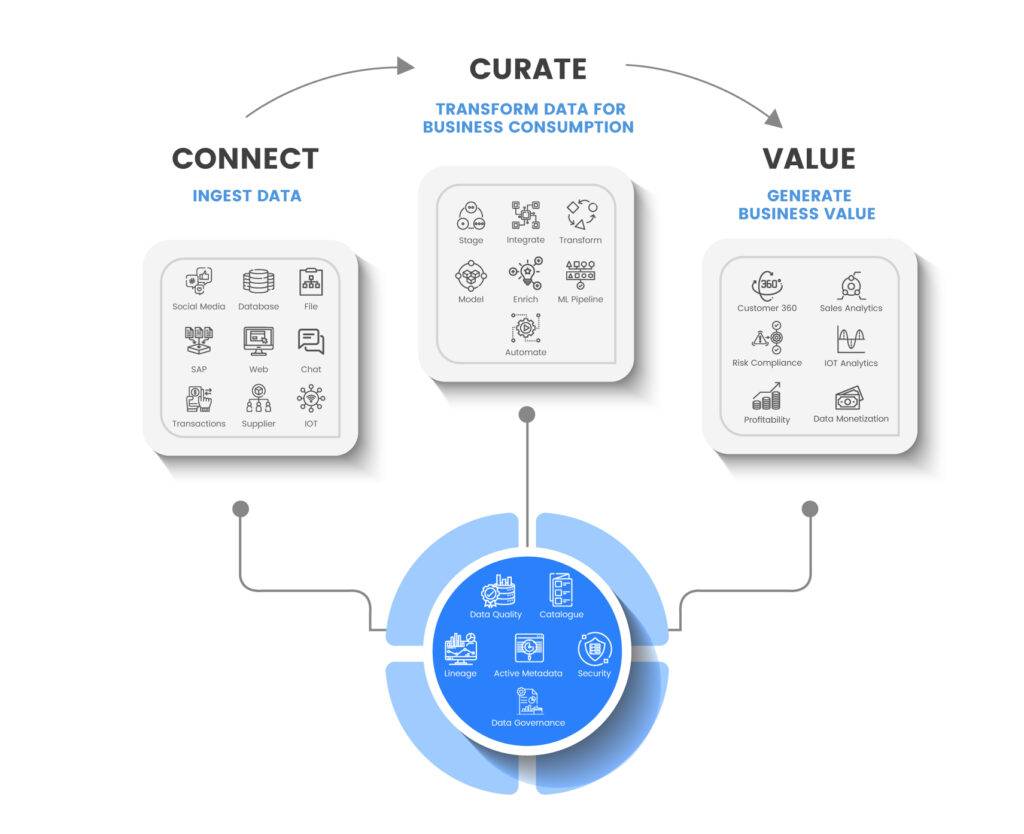

Data fabric is an architecture that can transform the way organizations manage their data. It connects and integrates heterogeneous data sources and systems, producing a unified and coherent view of data. As a result, managing, analyzing, and using data in real time becomes simpler and more efficient.

In today’s data-driven economy, the value of data lies in its context, accessibility, and actionability for business users. However, many businesses still struggle to operationalize their company data, facing growing complexity in storage, analysis, and retrieval. Along with traditional CRM and ERP data, modern businesses need to leverage data from diverse sources, such as clickstream and sensor data, and real-time customer insights from various systems.

To tackle these challenges, businesses need better visibility into their scattered data assets across diverse infrastructure types. Complex data environments with multiple vendors, clouds, and dynamic data prolong data preparation and require users with advanced metadata management skills.

The data fabric architecture has emerged as a solution to the difficulties of these hybrid data landscapes. A data fabric is a converged platform that supports diverse data management requirements while delivering appropriate IT service levels across all infrastructure and data sources.

It is a unified framework for managing, moving, and securing data across disparate data center deployments. With a data fabric, businesses can invest in the infrastructure services that meet their needs without worrying about data availability, confidentiality, or integrity. Because data is always ready for analysis, businesses get actionable insights to improve their operations. This is why a data fabric is also described as an all-in-one data management platform.

Advantages of Investing in a Robust Data Fabric Architecture

A proficient Data Fabric architecture provides several advantages, including:

- Automated data discovery for improved accessibility: Automated data discovery enables data democratization, making it easy for business users to access the information they need. A data fabric simplifies this process by providing a structured data environment that automates extracting data from source systems, filtering it, and making it available to the final business user. Read more about how SCIKIQ approaches data discovery.

- Faster time-to-value for advanced analytics: With a data fabric, the time it takes to move from raw data to advanced analytics is significantly reduced, because the steps in between are automated and simplified. Learn how advanced analytics and AI are changing the data management process.

- Enterprise-grade security for better value: Data security is a top priority, and a data fabric protects your most valuable asset by applying consistent security and compliance controls across every connected source, helping keep data safe and regulations met.

- Adaptable data architecture for increased productivity: A data fabric architecture makes all of your data accessible from different points, making your organization more productive and efficient. Explore how simple and powerful it is: https://scikiq.com/blog/scikiq-innovative-data-fabric-architecture/

- Industry-specific knowledge graphs and data models for insightful research: A robust data fabric enables intelligent examination of data by aligning it with established industry standards and data models. This gives your company a reliable path to ROI, rather than a haphazard mash-up of disparate data sets.

Data Management Capabilities of Data Fabric

Data Fabric offers a comprehensive suite of data management capabilities that help businesses extract maximum value from their data assets. In this subsection, we will explore some of the data management capabilities of Data Fabric, including data integration, governance, cataloging, data lake, data curation, and real-time analytics.

Data Integration

An efficient and reliable data integration foundation is crucial for a successful data fabric architecture. To ensure seamless synchronization across multiple delivery styles, the data fabric should support various integration methods such as streaming, replication, messaging, data virtualization, and microservices. Both specialists with complex integration needs and business users who require self-service data preparation should be able to use the data fabric easily.

The data fabric’s AI-powered intelligence helps discover valuable data sources by analyzing metadata from internal analytics, saving up to 70 percent of data administration costs.

Intelligent data integration plays a vital role in the data fabric’s success by considering all data and infrastructure environments during planning, implementation, and use. The data fabric should automatically create data flows and pipelines across data silos, correct schema drift, optimize task distribution, and automatically import new data assets within established guidelines. By remaining future-proof and not tied to any specific platform or set of programs, the data fabric architecture ensures that it can adapt to the changing needs of businesses in the future.

How intelligent data integration helps a data fabric

- Considers all data and infrastructure environments throughout planning, implementation, and use.

- Creates data flows and pipelines automatically across data silos.

- Corrects schema drift and distributes tasks optimally.

- Automatically imports new data assets within established guidelines.

- Remains future-proof, not tied to any specific platform or set of programs.
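Schema drift correction can be illustrated with a small Python sketch. It assumes a hypothetical target schema (`TARGET_SCHEMA`) and a hypothetical alias table (`ALIASES`) for columns renamed upstream; incoming records are plain dictionaries. Renamed columns are mapped back, values are coerced to the expected type where possible, missing columns are filled with `None`, and unexpected columns are reported rather than silently kept. This is one simple approach, not how any particular data fabric product implements it.

```python
from typing import Any

# Hypothetical target schema: column name -> expected Python type.
TARGET_SCHEMA: dict[str, type] = {"customer_id": int, "email": str, "country": str}

# Hypothetical aliases for columns that were renamed in a source system.
ALIASES: dict[str, str] = {"cust_id": "customer_id", "e_mail": "email"}


def correct_drift(record: dict[str, Any]) -> tuple[dict[str, Any], list[str]]:
    """Map a drifted record onto TARGET_SCHEMA and return (fixed_record, notes)."""
    notes: list[str] = []
    fixed: dict[str, Any] = {}
    for name, value in record.items():
        target = ALIASES.get(name, name)
        if target not in TARGET_SCHEMA:
            notes.append(f"unexpected column: {name}")
            continue
        if target != name:
            notes.append(f"renamed column: {name} -> {target}")
        expected = TARGET_SCHEMA[target]
        if value is not None and not isinstance(value, expected):
            try:
                value = expected(value)  # e.g. "42" -> 42
                notes.append(f"coerced {target} to {expected.__name__}")
            except (TypeError, ValueError):
                notes.append(f"could not coerce {target}; set to None")
                value = None
        fixed[target] = value
    # Fill columns the source no longer sends, in a deterministic order.
    for missing in sorted(TARGET_SCHEMA.keys() - fixed.keys()):
        notes.append(f"missing column: {missing}")
        fixed[missing] = None
    return fixed, notes


fixed, notes = correct_drift({"cust_id": "42", "e_mail": "a@example.com", "legacy_flag": 1})
print(fixed)  # {'customer_id': 42, 'email': 'a@example.com', 'country': None}
```

Reporting drift as notes, instead of failing the load, lets a pipeline keep running while the changes are reviewed against the established guidelines.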

Data Governance

Data governance plays a crucial role in ensuring that a data fabric operates efficiently and effectively. The data fabric provides a unified view of an organization’s data, but without proper data governance, this data may be unreliable, inconsistent, or even insecure. With data governance practices in place, businesses can rest assured that their data is safe, reliable, verifiable, documented, and managed.

Effective data governance ensures that data is properly labeled, tagged, and classified, making it easier for business users to discover and access the information they need. This improves decision-making and operational support, leading to better data analytics and insights. Additionally, data governance helps to prevent data from being inconsistent or incorrect, which can harm the integrity of a company and lead to poor decision-making and other difficulties.
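Part of labeling and classifying data can be automated. The Python sketch below assumes a few hypothetical rules (`RULES`) that tag a column when its name matches a pattern or when most of its sample values look like a given kind of data. The tag names and patterns are illustrative assumptions; real governance tools use richer classifiers and human review.

```python
import re

# Hypothetical classification rules: tag -> (column-name pattern, value pattern).
RULES = {
    "PII:email": (re.compile(r"e_?mail", re.I), re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")),
    "PII:phone": (re.compile(r"phone|mobile", re.I), re.compile(r"^\+?[\d\s\-()]{7,}$")),
    "FINANCE:amount": (re.compile(r"amount|price|revenue", re.I), re.compile(r"^-?\d+(\.\d+)?$")),
}


def classify_column(name: str, samples: list[str], threshold: float = 0.8) -> set[str]:
    """Return governance tags for a column based on its name and sample values."""
    tags = set()
    non_empty = [s for s in samples if s]
    for tag, (name_pattern, value_pattern) in RULES.items():
        name_hit = bool(name_pattern.search(name))
        value_ratio = (
            sum(bool(value_pattern.match(s)) for s in non_empty) / len(non_empty)
            if non_empty
            else 0.0
        )
        # Tag when the name matches, or when most sample values fit the pattern.
        if name_hit or value_ratio >= threshold:
            tags.add(tag)
    return tags


print(classify_column("contact", ["a@x.com", "b@y.org"]))  # {'PII:email'}
```

Matching on values as well as names catches sensitive columns with uninformative names, such as `contact` above.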

Compliance is also a critical aspect of data governance for data fabrics. By adhering to regulatory requirements, businesses can reduce operational expenses and avoid legal issues. Data governance ensures that data is properly managed, protected, and audited, which helps businesses meet all applicable standards.

Effective data governance is an essential component of a well-functioning data fabric. It ensures that data is accurate, reliable, and secure, allowing businesses to make better decisions and gain valuable insights from their data. By implementing robust data governance practices, organizations can maximize the value of their data fabric and drive growth and success for their business.

Also read, from our data governance series: Understanding Data Lineage: What It Is and Why It Matters

Data Lake

Data lakes are massive repositories that hold copies of raw data gathered from many source systems, sometimes thousands of them. Data fabrics, however, aren’t limited to moving data around the company. If the existing storage is adequate for analytical purposes, data can remain where it is and still be accessed easily through the fabric.
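The idea of leaving data where it lives and still querying it can be shown in miniature with SQLite’s `ATTACH DATABASE`, which lets one connection join tables stored in separate databases without copying them. This is only an analogy for the data virtualization a fabric performs across heterogeneous systems; the "CRM" and "ERP" sources and their tables are hypothetical.

```python
import sqlite3

# Two independent in-memory databases stand in for two source systems.
conn = sqlite3.connect(":memory:")                 # "main": a hypothetical CRM
conn.execute("ATTACH DATABASE ':memory:' AS erp")  # a second, separate database

conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Acme"), (2, "Globex")])

conn.execute("CREATE TABLE erp.orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
conn.executemany(
    "INSERT INTO erp.orders VALUES (?, ?, ?)",
    [(10, 1, 250.0), (11, 1, 100.0), (12, 2, 75.5)],
)

# One query spans both sources in place; no rows are copied between databases.
rows = conn.execute(
    """
    SELECT c.name, SUM(o.total) AS revenue
    FROM main.customers AS c
    JOIN erp.orders AS o ON o.customer_id = c.id
    GROUP BY c.name
    ORDER BY c.name
    """
).fetchall()
print(rows)  # [('Acme', 350.0), ('Globex', 75.5)]
```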

Data fabrics ensure not only that data is accurate but also that its significance is understood. Rather than simply replicating data from poorly understood sources, data fabrics turn it into a well-defined, verified data set.

Data warehouses and data marts are typically used for reporting and analytics. They store structured data that has been cleaned and organized. Data lakes, on the other hand, store all sorts of data, including structured, semi-structured, and unstructured data. This data is often raw and unprocessed.

In order to use data lakes for reporting and analytics, it is often necessary to integrate them with data warehouses and data marts. This can be a complex and time-consuming process. Only a select group of data scientists and engineers typically have access to the data lake, and they are responsible for preparing and exporting the data for use in other systems.

Data fabrics can simplify the integration of data lakes with other systems. They provide a uniform service for integrating data from various sources, and they can help to improve data quality and governance. This can make it easier for more users to access the data in the data lake, and it can also help to improve the insights that can be gained from the data.

In addition to providing a uniform service for integrating data, data fabrics can also provide a sophisticated system for analyzing and using data. This includes features such as data lineage, data quality management, and data discovery. These features can help users to understand the data in the data lake, and they can also help to identify and correct data quality issues.

Data Catalog

In the world of data management, a data catalog serves as the backbone of a data fabric. It is responsible for the identification, collection, and analysis of all technical, business, operational, and social metadata. It helps users to find, understand, and use data more effectively. A data catalog typically includes information about the data’s source, format, schema, lineage, and quality. It can also include business context, such as the meaning of the data and how it is used.

A contemporary data catalog has a broader scope that includes a wide range of information assets that end-users may use. These assets include business intelligence reports, domains, metrics, terminology, and functional business processes. As such, a data catalog is an essential component of an effective data governance strategy, which is crucial for ensuring a reliable data fabric.

It’s worth noting that a data fabric cannot exist without a data catalog. As top-level executives increasingly recognize the importance of well-managed data, choosing the right data catalog is becoming more crucial for businesses. An excellent data catalog should give data analysts, data scientists, and general business users the resources they need to explore and understand data. It should also recognize usage patterns so that it can offer better assistance to users.

The functions of a data catalog include “crawling” corporate repositories to find data sources, collecting metadata about those sources’ operations, and storing it in a database. It also uses machine learning techniques to assign metadata tags to datasets automatically. It is also a place where people can catalog, rank, and discuss datasets, and it offers a context-aware search index that makes it easier to find information quickly.
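A minimal Python sketch of three catalog functions: crawling a repository for technical metadata, tagging datasets, and building a search index. It assumes a SQLite database as the only repository, and uses simple hypothetical keyword rules (`TAG_RULES`) in place of the machine learning tagging described above. Table and tag names are illustrative.

```python
import sqlite3
from collections import defaultdict


def crawl(conn: sqlite3.Connection) -> list[dict]:
    """Collect technical metadata (tables, column names, declared types) from SQLite."""
    entries = []
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # Each PRAGMA table_info row is (cid, name, type, notnull, dflt_value, pk).
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        entries.append({"table": table, "columns": {c[1]: c[2] for c in columns}, "tags": set()})
    return entries


# Hypothetical keyword rules standing in for ML-based tagging.
TAG_RULES = {"customer": {"email", "customer_id", "name"}, "sales": {"total", "amount", "order_id"}}


def auto_tag(entries: list[dict]) -> None:
    """Add a tag to every dataset that has at least one column from the tag's keyword set."""
    for entry in entries:
        cols = set(entry["columns"])
        for tag, keywords in TAG_RULES.items():
            if cols & keywords:
                entry["tags"].add(tag)


def build_index(entries: list[dict]) -> dict[str, set[str]]:
    """Map each lowercase term (table, column, or tag name) to the tables it describes."""
    index: dict[str, set[str]] = defaultdict(set)
    for entry in entries:
        for term in {entry["table"], *entry["columns"], *entry["tags"]}:
            index[term.lower()].add(entry["table"])
    return index


def search(index: dict[str, set[str]], query: str) -> set[str]:
    """Return the tables that match every word in the query."""
    results = [index.get(word, set()) for word in query.lower().split()]
    return set.intersection(*results) if results else set()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE crm_contacts (customer_id INTEGER, email TEXT)")
conn.execute("CREATE TABLE erp_orders (order_id INTEGER, customer_id INTEGER, total REAL)")
catalog = crawl(conn)
auto_tag(catalog)
index = build_index(catalog)
print(sorted(search(index, "customer_id")))  # ['crm_contacts', 'erp_orders']
print(sorted(search(index, "sales")))        # ['erp_orders']
```

Ranking and discussion features would sit on top of the same entries, for example as extra fields that the index also covers.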

Data Curation

Data is essential to any company, but it’s only useful if it’s put to good use. Companies have a lot of information, but they need to use it wisely to make smart decisions. Analysts and decision-makers need to know which data to use to guide the company. With so much data, finding patterns can be overwhelming. Data curation makes it easier by organizing and consolidating all the data in an organization, making it more valuable.

Data fabric helps with data curation by combining automated and manual procedures to connect data from different applications. This helps businesses find new connections and insights. Curated data can benefit companies by helping them use their data efficiently while meeting regulatory and security requirements. It’s a critical component of any successful data strategy.
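The mix of automated and manual curation can be sketched in Python. The sketch assumes two hypothetical customer lists (CRM and ERP) and two illustrative rules: records whose normalized email matches are linked automatically, while records that match only on name are sent to a review queue for a person to confirm. Real matching logic would be richer; this only shows how the two kinds of procedure divide the work.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class CurationResult:
    merged: list = field(default_factory=list)        # linked automatically
    review_queue: list = field(default_factory=list)  # (crm, erp) pairs for a human to confirm
    unmatched: list = field(default_factory=list)     # no candidate found


def normalize(value: Optional[str]) -> Optional[str]:
    """Trim and lowercase a string so trivially different spellings compare equal."""
    return value.strip().lower() if value else None


def curate(crm: list, erp: list) -> CurationResult:
    """Link CRM and ERP records: email matches merge, name-only matches need review."""
    result = CurationResult()
    erp_by_email = {normalize(r.get("email")): r for r in erp if r.get("email")}
    erp_by_name = {normalize(r.get("name")): r for r in erp if r.get("name")}
    for rec in crm:
        email, name = normalize(rec.get("email")), normalize(rec.get("name"))
        if email and email in erp_by_email:
            # CRM values win on conflicts; the ERP record contributes extra fields.
            result.merged.append({**erp_by_email[email], **rec, "email": email})
        elif name and name in erp_by_name:
            result.review_queue.append((rec, erp_by_name[name]))
        else:
            result.unmatched.append(rec)
    return result


crm = [
    {"name": "Acme Corp", "email": " Sales@Acme.com "},
    {"name": "Globex", "email": None},
    {"name": "Initech", "email": "it@initech.com"},
]
erp = [
    {"name": "ACME Corporation", "email": "sales@acme.com", "erp_id": 7},
    {"name": "globex", "email": "info@globex.com", "erp_id": 9},
]
res = curate(crm, erp)
print(res.merged)  # [{'name': 'Acme Corp', 'email': 'sales@acme.com', 'erp_id': 7}]
```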

Read more about Data Prep Studio by SCIKIQ and how it is changing data preparation with the help of AI. Also, explore how SCIKIQ approaches the overall data curation process.

Data curation and consolidation are made easier with the help of data fabric, which employs a combination of automated and manual procedures in its operation. It constantly looks for and connects data from different applications to find new connections that can be used for business.

Knowledge Graphs

A knowledge graph is a system that is built by combining data from various sources, which may have different structures and formats. The knowledge graph is made up of three critical components: schemas, identities, and context. Schemas provide the structure for the graph, identities classify the nodes, and context determines how the information should be used. By employing machine learning techniques and natural language processing, a knowledge graph can generate a comprehensive understanding of the nodes, edges, and labels, enabling it to comprehend relationships between various objects in the data. This makes it easier for the knowledge graph to answer questions and provide insights.
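The three components can be made concrete with a toy, in-memory knowledge graph in Python. In this sketch (all identifiers hypothetical), the schema is the set of allowed edge types, identities map source-specific IDs onto one node per real-world entity, and context is kept as the source system recorded on every edge. It uses no machine learning or NLP; it only shows how merged data lets the graph answer a question that neither source could answer alone.

```python
from collections import defaultdict

# Hypothetical facts from two source systems, as (subject, predicate, object) triples.
CRM_TRIPLES = [("crm:42", "name", "Jane Doe"), ("crm:42", "bought", "product:tv-55")]
SUPPORT_TRIPLES = [("ticket:981", "opened_by", "sup:jd-7"), ("ticket:981", "about", "product:tv-55")]

# Schema: the edge types this graph accepts.
SCHEMA = {"name", "bought", "opened_by", "about"}

# Identities: source-specific IDs that refer to the same real-world entity.
SAME_AS = {"crm:42": "person:jane-doe", "sup:jd-7": "person:jane-doe"}


def build_graph(sources: dict) -> dict:
    """Merge triples into an adjacency list of (predicate, neighbor, source) edges."""
    graph = defaultdict(list)
    for source_name, triples in sources.items():
        for subj, pred, obj in triples:
            if pred not in SCHEMA:
                continue  # schema: skip edge types the model does not define
            subj, obj = SAME_AS.get(subj, subj), SAME_AS.get(obj, obj)
            # Context: keep each edge's source so its provenance can be checked.
            graph[subj].append((pred, obj, source_name))
            graph[obj].append((f"inverse:{pred}", subj, source_name))
    return graph


def tickets_about_purchases(graph: dict, person: str) -> list:
    """Answer: which support tickets concern products this person bought?"""
    bought = {node for pred, node, _ in graph.get(person, []) if pred == "bought"}
    return sorted({
        ticket
        for product in bought
        for pred, ticket, _ in graph.get(product, [])
        if pred == "inverse:about"
    })


graph = build_graph({"crm": CRM_TRIPLES, "support": SUPPORT_TRIPLES})
print(tickets_about_purchases(graph, "person:jane-doe"))  # ['ticket:981']
```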

Knowledge graphs have applications in several industries, such as retail, finance, entertainment, and healthcare. In retail, knowledge graphs can be used to analyze consumer behavior and recommend products, while in finance, they can aid in anti-money laundering activities and the Know-Your-Customer (KYC) process. In entertainment, knowledge graphs can recommend new content based on viewing patterns, and in healthcare, they can categorize medical research. Explore a few more use cases of the knowledge graphs in Data management.
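For the anti-money-laundering and KYC use case, a common graph question is whether a customer is connected to a flagged entity through a short chain of accounts or companies. The Python sketch below answers it with breadth-first search over hypothetical link data; the account names, the `FLAGGED` set, and the hop limit are illustrative assumptions, not a real screening rule.

```python
from collections import deque

# Hypothetical links (transfers, ownership) between accounts and companies.
EDGES = [
    ("acct:A", "acct:B"), ("acct:B", "co:Shell1"), ("co:Shell1", "acct:C"),
    ("acct:D", "acct:E"),
]
FLAGGED = {"acct:C"}


def build_adjacency(edges):
    """Build an undirected adjacency map; screening usually ignores link direction."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def shortest_link(adj, start, targets, max_hops=4):
    """Breadth-first search for the shortest chain from start to any target node."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in targets:
            return path
        if len(path) - 1 >= max_hops:
            continue  # do not expand chains that are already at the hop limit
        for nxt in sorted(adj.get(node, ())):  # sorted only for deterministic output
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


adj = build_adjacency(EDGES)
print(shortest_link(adj, "acct:A", FLAGGED))  # ['acct:A', 'acct:B', 'co:Shell1', 'acct:C']
```

Breadth-first search returns the shortest chain first, which is usually the most relevant one for an investigator to review.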

The semantic layer of the knowledge graph makes it more intuitive and easier to understand, providing valuable insights for data and analytics (D&A) executives. By employing integration standards and technologies, data integration specialists and data engineers can make it easier to access and deliver information from the knowledge graph. By adopting a knowledge graph, D&A executives can create commercial value from their data, but it is important to ensure that the adoption process is seamless to avoid delays.

Data fabric uses knowledge graphs to connect and integrate data from various sources, allowing businesses to gain a comprehensive understanding of their data.

By leveraging machine learning techniques, the knowledge graph can recognize relationships between data points and provide a complete picture of the data. This helps businesses to make informed decisions, improve efficiency, and create value from their data assets.

Active metadata management

Contextual information is the building block upon which a dynamic data fabric design is constructed, and the data fabric gathers and analyzes all types of metadata.

Data fabric supports active metadata management by gathering metadata from multiple sources. To do this, it needs a method to recognize, link, and analyze every type of metadata, including technical, commercial, operational, and social metadata.

Data fabric utilizes active metadata by gathering and analyzing metadata from various sources, turning passive metadata into active metadata, and using important metrics to train AI and machine learning algorithms. Data fabric recognizes, links, and analyzes different types of metadata to create a graphical model that is easy to understand based on how the organization’s relationships work. This enables businesses to make better predictions about managing and integrating data, leading to improved decision-making and operational efficiency.

To achieve frictionless data exchange, the data fabric must turn passive metadata into active metadata, and businesses must prioritize active metadata management. For this to happen, the data fabric has to:

- Analyze metadata as it comes in to find the most critical metrics and statistics, and then build a graphical model from them.

- Visualize the metadata so that it is easy to understand in terms of how the company’s relationships work.

- Use key metadata metrics so that AI and machine learning algorithms can learn over time and make better predictions about managing and integrating data.
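A small Python sketch of turning passive metadata into active signals. It assumes a hypothetical access log of `{"user", "dataset", "ts"}` events and a list of cataloged datasets, and derives three signals a fabric could act on: datasets ranked by use, stale datasets, and "people who used X also used Y" recommendations. Simple counting stands in here for the AI and machine learning models described above.

```python
from collections import Counter, defaultdict
from datetime import datetime, timedelta


def active_metadata(events, catalog, now, stale_after=timedelta(days=30)):
    """Turn raw access events (hypothetical log format) into actionable signals."""
    popularity = Counter(e["dataset"] for e in events)
    last_access = {}
    users_by_dataset = defaultdict(set)
    for e in events:
        d = e["dataset"]
        last_access[d] = max(last_access.get(d, e["ts"]), e["ts"])
        users_by_dataset[d].add(e["user"])
    # A dataset is stale if it was never accessed or not accessed recently.
    stale = sorted(d for d in catalog if d not in last_access or now - last_access[d] > stale_after)
    ranked = [d for d, _ in popularity.most_common()]
    return {"ranked": ranked, "stale": stale, "users_by_dataset": dict(users_by_dataset)}


def recommend(users_by_dataset, dataset):
    """Suggest datasets that share users with `dataset`, largest overlap first."""
    base = users_by_dataset.get(dataset, set())
    scores = {
        other: len(base & users)
        for other, users in users_by_dataset.items()
        if other != dataset and base & users
    }
    return sorted(scores, key=lambda d: (-scores[d], d))


now = datetime(2024, 6, 1)
events = [
    {"user": "ana", "dataset": "sales", "ts": datetime(2024, 5, 30)},
    {"user": "ben", "dataset": "sales", "ts": datetime(2024, 5, 31)},
    {"user": "ana", "dataset": "inventory", "ts": datetime(2024, 5, 29)},
    {"user": "ben", "dataset": "inventory", "ts": datetime(2024, 5, 20)},
    {"user": "ana", "dataset": "legacy_orders", "ts": datetime(2024, 1, 2)},
]
catalog = ["sales", "inventory", "legacy_orders", "hr_archive"]
signals = active_metadata(events, catalog, now)
print(signals["stale"])  # ['hr_archive', 'legacy_orders']
print(recommend(signals["users_by_dataset"], "sales"))  # ['inventory', 'legacy_orders']
```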

Explore more here: Active Metadata: Simplifying Data Management and Analysis

Embedded machine learning (ML)

The traditional method for analyzing data was based on trial and error, a strategy that cannot be used when the data sets being analyzed are vast and diverse. The process of evaluating large amounts of data may be simplified with the help of machine learning. Embedded machine learning can give accurate results and analysis because it uses data to create algorithms and models that work quickly and accurately for processing data in real time.

As more of the analog world becomes digital, our ability to learn from data by developing and testing algorithms will become increasingly important for what are now considered standard business models.

In a world where artificial intelligence is embedded in products, differentiation will come from creating sophisticated data supply chains that can identify, convert, and transfer data to where it is required. Whether or not AI and ML are productized, their strength comes from the data they use. Businesses that buy AI and ML solutions will get better results from them if they can find and use their data faster and at lower cost.

An efficiently managed data supply chain fuels many more proofs of concept, which can be carried out much more quickly and at a lower cost. Putting products that have embedded machine learning into production will result in lower prices and higher levels of reliability.

Because data is necessary for creating and implementing machine learning models, organizations need to figure out how to make sense of the available information. AI and ML allow businesses to quickly and safely process, transform, protect, and organize data from many different sources.

Real-time Data Analytics

In the modern digital age, companies must contend with rising levels of competition and constrained amounts of time. Everyone needs real-time analytics for actionable information to be available at their fingertips. It could be someone who works for a SaaS firm that wants to introduce a new product by the end of the week or a retail shop employee who manages inventory and wants to handle supply concerns before the end of the day. In situations like these, where you need to make a choice right away, having access to real-time information may be helpful.

When delivered on time, these insights help organizations evaluate data quickly and decide what to do with it. The term “real-time analytics” refers to collecting data from various sources as it is generated, analyzing it, and transforming it into a format the intended consumers can understand. It allows users to draw conclusions or gain insights as soon as data enters a company’s system.
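A building block of many real-time analytics systems is a sliding-window aggregate that updates with every incoming event. The Python sketch below keeps a running total over the last `window_seconds`, which could answer the retail question "how many units sold in the last minute?". It assumes events arrive in non-decreasing timestamp order; the class name and the example numbers are hypothetical.

```python
from collections import deque


class SlidingWindow:
    """Running total of values whose timestamps fall in (now - window, now]."""

    def __init__(self, window_seconds: float):
        self.window = window_seconds
        self.events = deque()  # (timestamp, value) pairs in arrival order
        self.total = 0.0

    def add(self, ts: float, value: float) -> float:
        """Record an event and return the updated total for the current window."""
        self.events.append((ts, value))
        self.total += value
        self._evict(ts)
        return self.total

    def _evict(self, now: float) -> None:
        # Drop events that have fallen out of the window, oldest first.
        while self.events and self.events[0][0] <= now - self.window:
            _, old_value = self.events.popleft()
            self.total -= old_value


window = SlidingWindow(60)  # units sold in the last 60 seconds
totals = [window.add(ts, units) for ts, units in [(0, 5), (30, 3), (61, 2)]]
print(totals)  # [5.0, 8.0, 5.0]
```

Each event is added and evicted once, so the cost per event stays constant no matter how long the stream runs.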

With a data fabric, you can be sure that the facts you base decisions on have been validated and assembled in real time from different sources.

Data profiling

Data profiling examines, evaluates, and synthesizes data into meaningful summaries. The procedure generates a high-level overview that facilitates the identification of problems, threats, and trends related to data quality. Companies can significantly benefit from the insights gained through data profiling.

Gartner defines data profiling as the process of evaluating the reliability and accuracy of information. Analytical algorithms can detect dataset properties such as mean, minimum, maximum, percentiles, and frequency for in-depth analysis. The system then runs further analyses to uncover metadata such as frequency distributions, key relationships, foreign key candidates, and functional dependencies. Finally, it compiles these results to show how well each element meets your company’s objectives.
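The statistics and checks listed in that definition can be computed with plain Python. This sketch profiles one column (completeness, distinct values, most frequent values, min, max, mean, quartiles), tests whether a column is a foreign key candidate for another, and checks a functional dependency. It is an in-memory illustration under simple assumptions (columns as lists, rows as dictionaries); the function names are hypothetical.

```python
import statistics
from collections import Counter


def profile_column(values):
    """Summarize one column: completeness, distinct values, frequencies, numeric stats."""
    non_null = [v for v in values if v is not None]
    summary = {
        "count": len(values),
        "nulls": len(values) - len(non_null),
        "distinct": len(set(non_null)),
        "top_values": Counter(non_null).most_common(3),
    }
    numeric = [v for v in non_null if isinstance(v, (int, float)) and not isinstance(v, bool)]
    if len(numeric) >= 2:  # statistics.quantiles needs at least two data points
        p25, median, p75 = statistics.quantiles(numeric, n=4)
        summary.update(min=min(numeric), max=max(numeric), mean=statistics.fmean(numeric),
                       p25=p25, median=median, p75=p75)
    return summary


def is_foreign_key_candidate(child_values, parent_values):
    """True if every non-null child value exists in the parent column."""
    parent = set(parent_values)
    return all(v in parent for v in child_values if v is not None)


def functional_dependency_holds(rows, determinant, dependent):
    """True if each value of `determinant` maps to exactly one value of `dependent`."""
    seen = {}
    for row in rows:
        key, val = row[determinant], row[dependent]
        if seen.setdefault(key, val) != val:
            return False
    return True


stats = profile_column([10, 20, None, 20, 40])
print(stats["nulls"], stats["distinct"], stats["mean"])  # 1 3 22.5
```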

Data profiling helps to clean up consumer databases by identifying and removing duplicate records and other typical sources of mistakes. Null values (unknown or missing values), unwanted values, values outside of the expected range, patterns that don’t match what was expected, and missing patterns are all examples of these mistakes. With a robust data fabric design, you can combine data from different sources to improve data profiling.
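The kinds of mistakes listed above can be flagged with a few rules. This Python sketch assumes hypothetical customer records with `email` and `age` fields, and reports null values, emails that don’t match the expected pattern, duplicates (after trimming and lowercasing), and ages outside an assumed valid range. A second function removes the duplicates, keeping the first occurrence.

```python
import re

# Deliberately simple email pattern; an assumption, not a full RFC 5322 check.
EMAIL = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def find_issues(records, age_range=(0, 120)):
    """Return (record_index, problem) pairs for common data-quality issues."""
    issues = []
    seen = {}  # normalized email -> index of first record that used it
    for i, rec in enumerate(records):
        email, age = rec.get("email"), rec.get("age")
        if email is None:
            issues.append((i, "null email"))
        else:
            normalized = email.strip().lower()
            if not EMAIL.match(normalized):
                issues.append((i, "email pattern mismatch"))
            elif normalized in seen:
                issues.append((i, f"duplicate of record {seen[normalized]}"))
            else:
                seen[normalized] = i
        if age is not None and not (age_range[0] <= age <= age_range[1]):
            issues.append((i, "age out of range"))
    return issues


def deduplicate(records):
    """Keep the first record per normalized email; keep records without an email."""
    seen, kept = set(), []
    for rec in records:
        email = (rec.get("email") or "").strip().lower()
        if email and email in seen:
            continue
        seen.add(email)
        kept.append(rec)
    return kept


records = [
    {"email": "ann@example.com", "age": 34},
    {"email": "ANN@example.com ", "age": 34},
    {"email": None, "age": 29},
    {"email": "not-an-email", "age": 250},
]
print(find_issues(records))
```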

Data Orchestration

Data orchestration’s job is to combine disparate data stores, clean them up, and make them accessible to data analysis programs. Businesses may automate and expedite data-driven decision-making with the help of data orchestration.

Data orchestration software connects your various storage systems so that your data analysis tools can quickly and easily reach the appropriate storage system at any time. Data orchestration systems do not provide additional storage; instead, they are a newer data technology that can help eliminate data silos where other approaches have failed. Data orchestration is also a crucial part of the data fabric for managing metadata effectively.
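At its core, orchestration runs each step only after the steps it depends on have finished. This Python sketch uses the standard library’s `graphlib.TopologicalSorter` (Python 3.9+) to run a hypothetical pipeline in dependency order; the task names and placeholder task bodies are assumptions. Production orchestrators add scheduling, retries, and monitoring on top of this ordering.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "extract_crm": set(),
    "extract_erp": set(),
    "clean_customers": {"extract_crm"},
    "clean_orders": {"extract_erp"},
    "join_customer_orders": {"clean_customers", "clean_orders"},
    "publish_to_bi": {"join_customer_orders"},
}


def run_pipeline(pipeline, tasks):
    """Run tasks in dependency order; tasks in one ready batch could run in parallel."""
    sorter = TopologicalSorter(pipeline)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies form a cycle
    log = []
    while sorter.is_active():
        for name in sorted(sorter.get_ready()):  # sorted only for deterministic output
            tasks[name]()
            log.append(name)
            sorter.done(name)
    return log


tasks = {name: (lambda: None) for name in PIPELINE}  # placeholder task bodies
print(run_pipeline(PIPELINE, tasks))
```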

Explore how data orchestration helps in the overall data management process.

Data visualization

Data visualization represents data in a visual format (such as a map or graph) that facilitates comprehension and insight-gathering. Data visualization’s primary objective is to facilitate the discovery of hidden relationships and anomalies in massive datasets.

Data visualization allows for the efficient and immediate transmission of information utilizing graphical representation. Also, by using this method, businesses may learn what influences customers’ buying decisions, focus on the regions that need it the most, make data more memorable for key audiences, determine the best times and locations to introduce new items and anticipate sales volumes.

Learn how SCIKIQ has transformed data visualization.

Conclusion

The data fabric architecture is a complex and ambitious concept that won’t be ready for prime time anytime soon. However, this design pattern should guide your choice of technologies as you plot your route. By ensuring that all components of the data fabric can consume and share information, you can lay the groundwork for a flexible and highly autonomous data service that handles a wide range of data and analytics use cases.

Get all the benefits of data fabric architecture by using SCIKIQ as your data management platform.

Explore more about what we do best

SCIKIQ Data Lineage Solutions: Data Lineage steps beyond the limitations of traditional tools.

SCIKIQ Data Visualization: Transforming BI with Innovative Reporting and Visualization

SCIKIQ Data curation: AI in Action with Data Prep Studio

Automating Data Governance: A game changer for efficient data management & great Data Governance.
